Three product releases in under four weeks have reframed a basic engineering metric, tokens per second, as the defining competitive axis in AI-assisted software development.

On February 10, Cursor shipped Composer 1.5, a proprietary model whose reinforcement learning was scaled 20x over its predecessor. Two days later, OpenAI released GPT-5.3-Codex-Spark in partnership with Cerebras, and on March 5 it followed with GPT-5.4, its most token-efficient reasoning model to date.

Each release made a distinct bet on what speed means and how to achieve it.

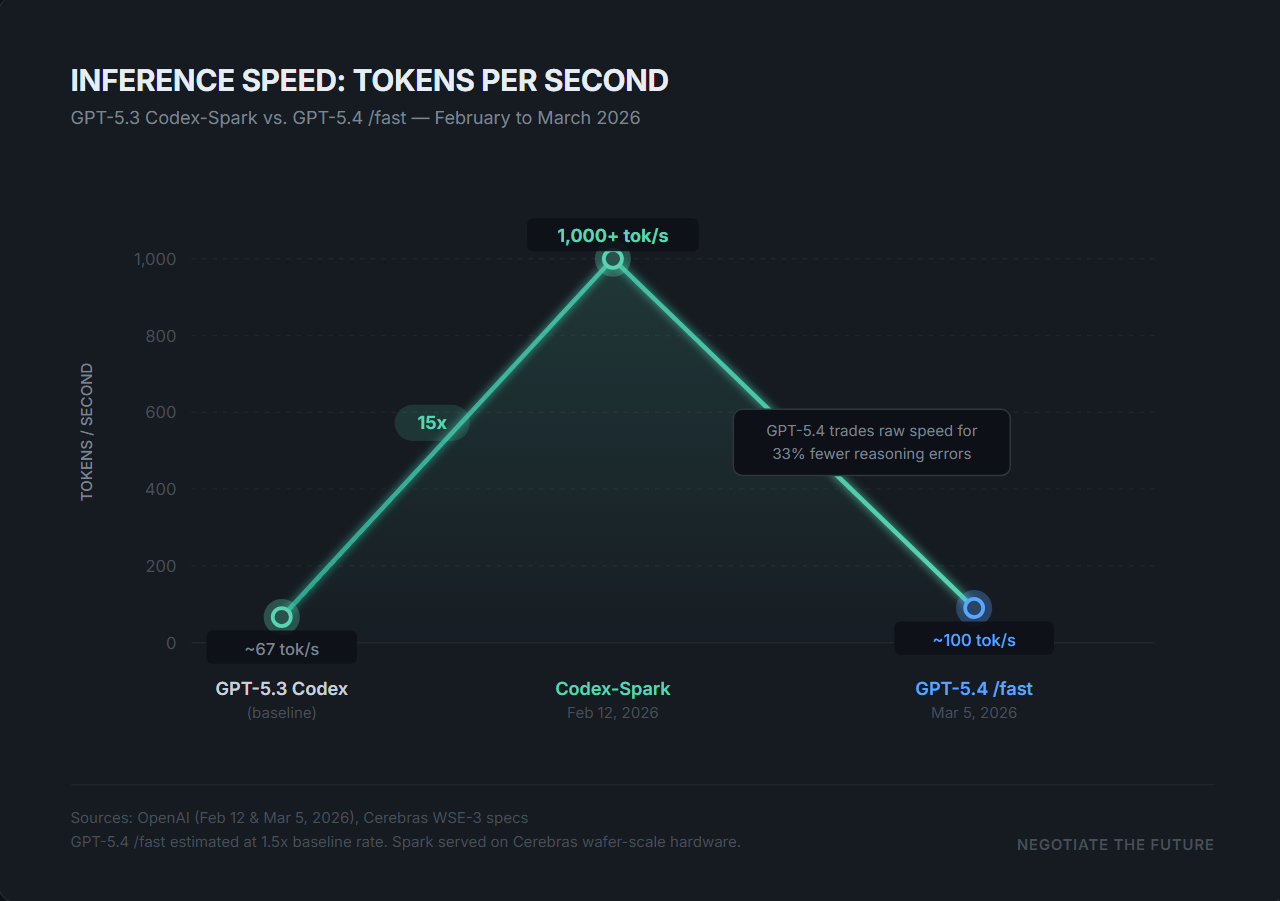

Codex-Spark is the most literal interpretation: raw throughput on specialized hardware. The model generates over 1,000 tokens per second when served on Cerebras' Wafer Scale Engine 3, a chip containing 4 trillion transistors and 900,000 AI-optimized cores. That rate is roughly 15 times the throughput of the flagship GPT-5.3-Codex.
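To put the throughput gap in wall-clock terms, the sketch below reads "roughly 15 times" as a straight ratio of output tokens per second, which implies a flagship rate near 67 tokens per second. That derived rate and the 2,000-token edit size are illustrative assumptions, not reported figures, and the calculation ignores time-to-first-token.

```python
# Assumption: "roughly 15 times" is treated as a 15x ratio of output
# tokens per second. The flagship rate below is derived, not reported.
SPARK_TOKENS_PER_SEC = 1_000          # "over 1,000", used here as a floor
SPEEDUP = 15
FLAGSHIP_TOKENS_PER_SEC = SPARK_TOKENS_PER_SEC / SPEEDUP  # about 66.7 tok/s


def generation_seconds(output_tokens: int, tokens_per_sec: float) -> float:
    """Time to stream output_tokens at a steady decode rate (ignores TTFT)."""
    return output_tokens / tokens_per_sec


edit_tokens = 2_000  # hypothetical size of one generated code edit
spark_s = generation_seconds(edit_tokens, SPARK_TOKENS_PER_SEC)
flagship_s = generation_seconds(edit_tokens, FLAGSHIP_TOKENS_PER_SEC)
print(f"Spark: {spark_s:.1f} s, flagship: {flagship_s:.1f} s")
# Spark: 2.0 s, flagship: 30.0 s
```

At these assumed sizes, the difference is between an edit that appears almost immediately and one the developer waits half a minute for.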

OpenAI also cut time-to-first-token by 50 percent and per-roundtrip overhead by 80 percent, gains achieved through persistent WebSocket connections and a rewritten inference stack.

The speed comes at a cost. On SWE-Bench Pro the gap is small: Spark scores 56 percent against 56.8 percent for the full Codex model. It widens to nearly 19 points on Terminal-Bench 2.0, where Spark scores 58.4 percent against the flagship's 77.3 percent.
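The reductions in time-to-first-token and per-roundtrip overhead matter most when a developer makes many short requests. The sketch below is a deliberate simplification: it treats roundtrip overhead, time-to-first-token, and decode time as independent additive terms, and every baseline value (0.5 s overhead, 1.0 s TTFT, 20 turns of 200 tokens) is hypothetical. Only the 50 and 80 percent reductions come from OpenAI's figures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LatencyProfile:
    """Hypothetical per-request latency terms, treated as additive."""
    overhead_s: float       # per-roundtrip overhead (connection setup, framing)
    ttft_s: float           # time to first token
    tokens_per_sec: float   # steady-state decode rate

    def request_seconds(self, output_tokens: int) -> float:
        return self.overhead_s + self.ttft_s + output_tokens / self.tokens_per_sec


def session_seconds(profile: LatencyProfile, turns: int, tokens_per_turn: int) -> float:
    """Total wall-clock time for a sequence of short interactive turns."""
    return turns * profile.request_seconds(tokens_per_turn)


# Hypothetical baseline values, chosen for illustration only.
baseline = LatencyProfile(overhead_s=0.5, ttft_s=1.0, tokens_per_sec=1_000)

# Apply the reported reductions; throughput is held constant on purpose.
improved = LatencyProfile(
    overhead_s=baseline.overhead_s * (1 - 0.80),  # 80% lower roundtrip overhead
    ttft_s=baseline.ttft_s * (1 - 0.50),          # 50% lower TTFT
    tokens_per_sec=baseline.tokens_per_sec,
)

before = session_seconds(baseline, turns=20, tokens_per_turn=200)
after = session_seconds(improved, turns=20, tokens_per_turn=200)
print(f"before: {before:.1f} s, after: {after:.1f} s")
# before: 34.0 s, after: 16.0 s
```

Holding throughput constant isolates the fixed costs: with short replies, the two reductions alone more than halve the hypothetical session time.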

OpenAI released Spark as a research preview for ChatGPT Pro subscribers, positioning it as a tool for interactive development rather than autonomous engineering.

GPT-5.4, released three weeks later, defines speed differently. Rather than increasing raw token velocity, the model reduces the number of tokens required to reach a correct answer. OpenAI reported that GPT-5.4 is 33 percent less likely than GPT-5.2 to make errors in individual claims, while using significantly fewer reasoning tokens overall.

In Codex, its fast mode delivers token velocity up to 1.5 times higher. The model also introduces native computer-use capabilities and a one-million-token context window.
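Token efficiency and token velocity compound, because time to an answer is roughly total generated tokens divided by decode rate. In the sketch below, the token counts, the 100 tokens-per-second baseline, and the 40 percent cut in reasoning tokens are hypothetical, since OpenAI's "significantly fewer" is not quantified here; the 1.5x factor is the stated upper bound for fast mode.

```python
def answer_seconds(reasoning_tokens: int, answer_tokens: int, tokens_per_sec: float) -> float:
    """Wall-clock decode time for one answer, ignoring TTFT and network overhead."""
    return (reasoning_tokens + answer_tokens) / tokens_per_sec


# Hypothetical workload and rates, chosen for illustration only.
BASE_RATE = 100.0          # tokens per second
FAST_MODE_FACTOR = 1.5     # stated upper bound for Codex fast mode
REASONING_CUT = 0.40       # assumed reduction in reasoning tokens

reasoning, answer = 10_000, 1_000
lean_reasoning = round(reasoning * (1 - REASONING_CUT))  # 6,000 tokens

baseline = answer_seconds(reasoning, answer, BASE_RATE)
leaner = answer_seconds(lean_reasoning, answer, BASE_RATE)
leaner_fast = answer_seconds(lean_reasoning, answer, BASE_RATE * FAST_MODE_FACTOR)

print(f"{baseline:.1f} s -> {leaner:.1f} s -> {leaner_fast:.1f} s")
# 110.0 s -> 70.0 s -> 46.7 s
```

Under these assumptions, fewer tokens and faster tokens multiply: each lever alone helps, and together they cut the hypothetical answer time by more than half.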

Cursor's Composer 1.5 represents a third approach entirely. Cursor, valued at $29.3 billion after its November 2025 Series D, built the model by scaling reinforcement learning 20 times beyond its predecessor, with post-training compute exceeding the compute used to pretrain the base model. Composer 1.5 introduces adaptive thinking, which calibrates reasoning depth to task complexity, and a trained self-summarization mechanism that preserves accuracy when the context window overflows. The model is priced at $3.50 per million input tokens and $17.50 per million output tokens.
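At the listed rates, an output token costs five times as much as an input token, so the input/output mix largely determines the bill for agentic sessions. A minimal cost calculator, assuming a hypothetical request of 50,000 input and 5,000 output tokens and ignoring any discounts or pricing tiers not described here:

```python
# Listed Composer 1.5 rates, in US dollars per million tokens.
INPUT_USD_PER_MILLION = 3.50
OUTPUT_USD_PER_MILLION = 17.50


def request_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Cost of one request at the listed per-million-token rates."""
    return (
        input_tokens * INPUT_USD_PER_MILLION
        + output_tokens * OUTPUT_USD_PER_MILLION
    ) / 1_000_000


# Hypothetical agent turn: large context in, modest edit out.
cost = request_cost_usd(input_tokens=50_000, output_tokens=5_000)
print(f"${cost:.4f} per request")  # $0.2625 per request
```

In this example, output is a tenth of the tokens but a third of the cost.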

The convergence on speed reflects a market that has matured past the question of whether AI can write code. Cursor crossed $2 billion in annualized revenue in February 2026. OpenAI's partnership with Cerebras, valued at over $10 billion, commits 750 megawatts of wafer-scale systems to inference serving alone.

These are infrastructure-scale commitments predicated on a specific theory: that the constraint on AI coding adoption is no longer capability but latency. Codex-Spark trades accuracy for interactive speed, GPT-5.4 preserves accuracy by compressing reasoning, and Composer 1.5 uses reinforcement learning to make an agent faster at choosing what to do next.

Each model answers the same question with a different definition of what "fast" means.